A Collaborative Multi-Agent Reinforcement Learning Approach for Non-Stationary Environments with Unknown Change Points

2025 · Open Access · DOI: https://doi.org/10.3390/math13111738 · OA: W4410721624

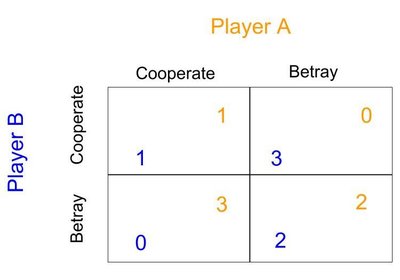

Reinforcement learning has achieved significant success in sequential decision-making problems, but it adapts poorly to non-stationary environments with unknown dynamics, a challenge that is particularly pronounced in multi-agent scenarios. This study aims to improve the adaptive capability of multi-agent systems in such environments. We propose MACPH, a cooperative Multi-Agent Reinforcement Learning (MARL) algorithm based on MADDPG that incorporates three mechanisms: a Composite Experience Replay Buffer (CERB) that balances recent and important historical experiences through a dual-buffer structure and mixed sampling; an Adaptive Parameter Space Noise (APSN) mechanism that perturbs actor network parameters and dynamically adjusts the perturbation intensity to achieve coherent, state-dependent exploration; and a Huber loss function that mitigates the impact of outliers in Temporal Difference (TD) errors and improves training stability. Experiments were conducted in standard and non-stationary navigation and communication task scenarios. Ablation studies confirmed the positive contribution of each component and their synergistic effects. In non-stationary scenarios featuring abrupt environmental changes, MACPH outperforms baseline algorithms such as DDPG, MADDPG, and MATD3 in terms of reward performance, adaptation speed, learning stability, and robustness. MACPH thus offers an effective solution for multi-agent reinforcement learning in complex non-stationary environments.
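
To make the three mechanisms more concrete, the following is a minimal Python sketch, not the authors' implementation. The abstract does not give capacities, sampling ratios, priority definitions, or the noise-adaptation rule, so every name and default here (`CompositeReplayBuffer`, `recent_fraction`, `delta`, `adapt_noise_scale`, `factor`) is a hypothetical choice made for illustration. In particular, the noise rule shown is the multiplicative scheme commonly used with parameter space noise, assumed here rather than taken from the paper.

```python
import random
from collections import deque


def huber_loss(td_error, delta=1.0):
    """Huber loss: quadratic for |td_error| <= delta, linear beyond it,
    so large TD-error outliers contribute less than under squared error."""
    abs_err = abs(td_error)
    if abs_err <= delta:
        return 0.5 * td_error ** 2
    return delta * (abs_err - 0.5 * delta)


class CompositeReplayBuffer:
    """Hypothetical dual-buffer replay (assumed design, not from the paper):
    a FIFO of recent transitions plus a bounded store that keeps the
    transitions with the highest priority (e.g. largest |TD error|)."""

    def __init__(self, recent_capacity, important_capacity,
                 recent_fraction=0.5, seed=None):
        self.recent = deque(maxlen=recent_capacity)
        self.important = []  # list of (priority, transition) pairs
        self.important_capacity = important_capacity
        self.recent_fraction = recent_fraction
        self.rng = random.Random(seed)

    def add(self, transition, priority):
        # Every transition enters the recent buffer; the oldest is evicted.
        self.recent.append(transition)
        # It also competes for a slot in the important buffer.
        self.important.append((priority, transition))
        if len(self.important) > self.important_capacity:
            lowest = min(range(len(self.important)),
                         key=lambda i: self.important[i][0])
            self.important.pop(lowest)

    def sample(self, batch_size):
        # Mixed sampling: part of the batch from each buffer.
        n_recent = min(round(batch_size * self.recent_fraction),
                       len(self.recent))
        n_important = min(batch_size - n_recent, len(self.important))
        batch = self.rng.sample(list(self.recent), n_recent)
        batch += [t for _, t in self.rng.sample(self.important, n_important)]
        return batch


def adapt_noise_scale(sigma, action_distance, target_distance, factor=1.01):
    """Assumed adaptation rule: increase the parameter-noise scale when the
    perturbed policy's actions stay closer to the unperturbed ones than the
    target distance, and decrease it otherwise."""
    if action_distance < target_distance:
        return sigma * factor
    return sigma / factor


# Usage: fill the buffer, then draw a mixed batch.
buffer = CompositeReplayBuffer(recent_capacity=3, important_capacity=2, seed=0)
for name, priority in [("a", 0.1), ("b", 5.0), ("c", 0.2), ("d", 9.0), ("e", 0.3)]:
    buffer.add(name, priority)
batch = buffer.sample(4)  # two recent transitions, then two important ones
```

In this sketch the important buffer retains the two highest-priority transitions even after they leave the recent window, which is one plausible reading of "balancing recent and important historical experiences" when the environment changes abruptly.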