Capacity Bounds for Networks with Correlated Sources and Characterisation of Distributions by Entropies

2016 · Open Access · DOI: https://doi.org/10.48550/arxiv.1607.02822 · OA: W4300896549

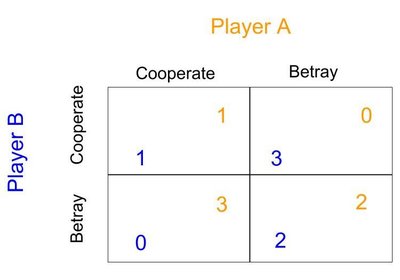

Characterising the capacity region for a network can be extremely difficult. Even with independent sources, determining the capacity region can be as hard as the open problem of characterising all information inequalities. The majority of computable outer bounds in the literature are relaxations of the Linear Programming bound, which involves entropy functions of random variables related to the sources and link messages. When sources are not independent, the problem is even more complicated. Extension of Linear Programming bounds to networks with correlated sources is largely open. Source dependence is usually specified via a joint probability distribution, and one of the main challenges in extending Linear Programming bounds is the difficulty (or impossibility) of characterising arbitrary dependencies via entropy functions. This paper tackles the problem by answering the question of how well entropy functions can characterise correlation among sources. We show that by using carefully chosen auxiliary random variables, the characterisation can be fairly "accurate". Using such auxiliary random variables, we also give implicit and explicit outer bounds on the capacity of networks with correlated sources. The characterisation of correlation or joint distribution via Shannon entropy functions is also applicable to other information measures such as Rényi entropy and Tsallis entropy.
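
To illustrate why entropy functions alone may fail to pin down a joint distribution, the following minimal sketch compares two different joint distributions of a source pair (X, Y) that share the same Shannon entropies H(X), H(Y) and H(X,Y). It is not the paper's construction and does not use auxiliary random variables; the function names and the two toy distributions are assumptions chosen for illustration. The standard Rényi and Tsallis entropies are included only to show that these measures are computed from the same probability vectors.

```python
import math

def shannon(p):
    """Shannon entropy (in bits) of a probability vector p."""
    return -sum(q * math.log2(q) for q in p if q > 0)

def renyi(p, alpha):
    """Renyi entropy of order alpha (alpha > 0, alpha != 1), in bits."""
    return math.log2(sum(q ** alpha for q in p if q > 0)) / (1 - alpha)

def tsallis(p, index):
    """Tsallis entropy of the given index (index != 1)."""
    return (1 - sum(q ** index for q in p if q > 0)) / (index - 1)

def entropy_vector(joint):
    """Return (H(X), H(Y), H(X,Y)) for a joint pmf given as nested lists."""
    px = [sum(row) for row in joint]            # marginal of X (rows)
    py = [sum(col) for col in zip(*joint)]      # marginal of Y (columns)
    pxy = [v for row in joint for v in row]     # flattened joint pmf
    return shannon(px), shannon(py), shannon(pxy)

# Two hypothetical toy distributions of binary sources (illustrative only).
same = [[0.5, 0.0], [0.0, 0.5]]      # Y = X
flipped = [[0.0, 0.5], [0.5, 0.0]]   # Y = 1 - X

print(entropy_vector(same))
print(entropy_vector(flipped))
```

Both calls return the same triple even though the two joint distributions differ, so in this toy case the entropies of X, Y and (X, Y) by themselves cannot distinguish the two dependence structures.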