Neural Architecture Search for Parameter-Efficient Fine-tuning of Large Pre-trained Language Models

Neal Lawton, Anoop Kumar, Govind Thattai, Aram Galstyan, Greg Ver Steeg

2023 · Open Access · DOI: https://doi.org/10.18653/v1/2023.findings-acl.539

Parameter-efficient tuning (PET) methods fit pre-trained language models (PLMs) to downstream tasks either by computing a small compressed update for a subset of model parameters or by appending a small number of new parameters to the pre-trained network and fine-tuning them. Hand-designed PET architectures from the literature perform well in practice, but could potentially be improved via automated neural architecture search (NAS). We propose an efficient NAS method for learning PET architectures via structured and unstructured pruning. We present experiments on GLUE that demonstrate the effectiveness of our algorithm and discuss how PET architectural design choices affect performance in practice.
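To make the two pruning styles named in the abstract concrete, here is a minimal NumPy sketch. It is an illustration only and not the authors' algorithm: the low-rank factorization, the magnitude-based scoring, and all names (`structured_prune`, `unstructured_prune`, the matrix sizes) are assumptions chosen for the example.

```python
import numpy as np

rng = np.random.default_rng(0)
d_out, d_in, rank = 8, 6, 4

# Frozen pre-trained weight (random stand-in for illustration).
W = rng.normal(size=(d_out, d_in))

# Trainable low-rank factors; the "small compressed update" is U @ V.
U = rng.normal(scale=0.1, size=(d_out, rank))
V = rng.normal(scale=0.1, size=(rank, d_in))


def structured_prune(U, V, keep):
    """Keep the `keep` rank-1 components with the largest norm product.

    Assumed criterion: whole components are removed at once, which is
    what makes the pruning "structured".
    """
    scores = np.linalg.norm(U, axis=0) * np.linalg.norm(V, axis=1)
    idx = np.sort(np.argsort(scores)[-keep:])
    return U[:, idx], V[idx, :]


def unstructured_prune(delta, keep):
    """Zero all but the `keep` largest-magnitude entries of an update.

    Assumed criterion: individual entries are removed independently,
    which is what makes the pruning "unstructured".
    """
    flat = np.abs(delta).ravel()
    mask = np.zeros(flat.shape, dtype=bool)
    mask[np.argsort(flat)[-keep:]] = True
    return delta * mask.reshape(delta.shape)


U2, V2 = structured_prune(U, V, keep=2)
low_rank_update = U2 @ V2                       # rank at most 2
sparse_update = unstructured_prune(U @ V, keep=10)

# The adapted layer adds the pruned update to the frozen weight.
W_adapted = W + low_rank_update
```

In this sketch the structured variant leaves 2 × (8 + 6) = 28 trainable values against 48 in the full weight matrix, while the unstructured variant keeps at most 10 individual entries of the update.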

Metadata

- Type: article
- Language: en
- Landing Page: https://doi.org/10.18653/v1/2023.findings-acl.539, https://aclanthology.org/2023.findings-acl.539.pdf
- OA Status: gold
- Cited By: 8
- References: 39
- Related Works: 10
- OpenAlex ID: https://openalex.org/W4385570673

All OpenAlex metadata

- OpenAlex ID

-

https://openalex.org/W4385570673Canonical identifier for this work in OpenAlex

- DOI

-

https://doi.org/10.18653/v1/2023.findings-acl.539Digital Object Identifier

- Title

-

Neural Architecture Search for Parameter-Efficient Fine-tuning of Large Pre-trained Language ModelsWork title

- Type

-

articleOpenAlex work type

- Language

-

enPrimary language

- Publication year

-

2023Year of publication

- Publication date

-

2023-01-01Full publication date if available

- Authors

-

Neal Lawton, Anoop Kumar, Govind Thattai, Aram Galstyan, Greg Ver SteegList of authors in order

- Landing page

-

https://doi.org/10.18653/v1/2023.findings-acl.539Publisher landing page

- PDF URL

-

https://aclanthology.org/2023.findings-acl.539.pdfDirect link to full text PDF

- Open access

-

YesWhether a free full text is available

- OA status

-

goldOpen access status per OpenAlex

- OA URL

-

https://aclanthology.org/2023.findings-acl.539.pdfDirect OA link when available

- Concepts

-

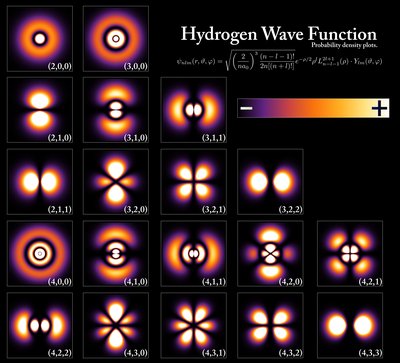

Computer science, Pruning, Language model, Architecture, Artificial intelligence, Artificial neural network, Machine learning, Fine-tuning, Visual arts, Biology, Art, Quantum mechanics, Agronomy, PhysicsTop concepts (fields/topics) attached by OpenAlex

- Cited by

-

8Total citation count in OpenAlex

- Citations by year (recent)

-

2025: 4, 2024: 1, 2023: 3Per-year citation counts (last 5 years)

- References (count)

-

39Number of works referenced by this work

- Related works (count)

-

10Other works algorithmically related by OpenAlex

Full payload

| id | https://openalex.org/W4385570673 |

|---|---|

| doi | https://doi.org/10.18653/v1/2023.findings-acl.539 |

| ids.doi | https://doi.org/10.18653/v1/2023.findings-acl.539 |

| ids.openalex | https://openalex.org/W4385570673 |

| fwci | 2.0435431 |

| type | article |

| title | Neural Architecture Search for Parameter-Efficient Fine-tuning of Large Pre-trained Language Models |

| biblio.issue | |

| biblio.volume | |

| biblio.last_page | 8515 |

| biblio.first_page | 8506 |

| topics[0].id | https://openalex.org/T10028 |

| topics[0].field.id | https://openalex.org/fields/17 |

| topics[0].field.display_name | Computer Science |

| topics[0].score | 0.9998999834060669 |

| topics[0].domain.id | https://openalex.org/domains/3 |

| topics[0].domain.display_name | Physical Sciences |

| topics[0].subfield.id | https://openalex.org/subfields/1702 |

| topics[0].subfield.display_name | Artificial Intelligence |

| topics[0].display_name | Topic Modeling |

| topics[1].id | https://openalex.org/T10181 |

| topics[1].field.id | https://openalex.org/fields/17 |

| topics[1].field.display_name | Computer Science |

| topics[1].score | 0.9994999766349792 |

| topics[1].domain.id | https://openalex.org/domains/3 |

| topics[1].domain.display_name | Physical Sciences |

| topics[1].subfield.id | https://openalex.org/subfields/1702 |

| topics[1].subfield.display_name | Artificial Intelligence |

| topics[1].display_name | Natural Language Processing Techniques |

| topics[2].id | https://openalex.org/T10201 |

| topics[2].field.id | https://openalex.org/fields/17 |

| topics[2].field.display_name | Computer Science |

| topics[2].score | 0.9950000047683716 |

| topics[2].domain.id | https://openalex.org/domains/3 |

| topics[2].domain.display_name | Physical Sciences |

| topics[2].subfield.id | https://openalex.org/subfields/1702 |

| topics[2].subfield.display_name | Artificial Intelligence |

| topics[2].display_name | Speech Recognition and Synthesis |

| is_xpac | False |

| apc_list | |

| apc_paid | |

| concepts[0].id | https://openalex.org/C41008148 |

| concepts[0].level | 0 |

| concepts[0].score | 0.829767107963562 |

| concepts[0].wikidata | https://www.wikidata.org/wiki/Q21198 |

| concepts[0].display_name | Computer science |

| concepts[1].id | https://openalex.org/C108010975 |

| concepts[1].level | 2 |

| concepts[1].score | 0.7423743009567261 |

| concepts[1].wikidata | https://www.wikidata.org/wiki/Q500094 |

| concepts[1].display_name | Pruning |

| concepts[2].id | https://openalex.org/C137293760 |

| concepts[2].level | 2 |

| concepts[2].score | 0.6298213005065918 |

| concepts[2].wikidata | https://www.wikidata.org/wiki/Q3621696 |

| concepts[2].display_name | Language model |

| concepts[3].id | https://openalex.org/C123657996 |

| concepts[3].level | 2 |

| concepts[3].score | 0.6253625750541687 |

| concepts[3].wikidata | https://www.wikidata.org/wiki/Q12271 |

| concepts[3].display_name | Architecture |

| concepts[4].id | https://openalex.org/C154945302 |

| concepts[4].level | 1 |

| concepts[4].score | 0.5626947283744812 |

| concepts[4].wikidata | https://www.wikidata.org/wiki/Q11660 |

| concepts[4].display_name | Artificial intelligence |

| concepts[5].id | https://openalex.org/C50644808 |

| concepts[5].level | 2 |

| concepts[5].score | 0.5295194983482361 |

| concepts[5].wikidata | https://www.wikidata.org/wiki/Q192776 |

| concepts[5].display_name | Artificial neural network |

| concepts[6].id | https://openalex.org/C119857082 |

| concepts[6].level | 1 |

| concepts[6].score | 0.46950244903564453 |

| concepts[6].wikidata | https://www.wikidata.org/wiki/Q2539 |

| concepts[6].display_name | Machine learning |

| concepts[7].id | https://openalex.org/C157524613 |

| concepts[7].level | 2 |

| concepts[7].score | 0.44637688994407654 |

| concepts[7].wikidata | https://www.wikidata.org/wiki/Q2828883 |

| concepts[7].display_name | Fine-tuning |

| concepts[8].id | https://openalex.org/C153349607 |

| concepts[8].level | 1 |

| concepts[8].score | 0.0 |

| concepts[8].wikidata | https://www.wikidata.org/wiki/Q36649 |

| concepts[8].display_name | Visual arts |

| concepts[9].id | https://openalex.org/C86803240 |

| concepts[9].level | 0 |

| concepts[9].score | 0.0 |

| concepts[9].wikidata | https://www.wikidata.org/wiki/Q420 |

| concepts[9].display_name | Biology |

| concepts[10].id | https://openalex.org/C142362112 |

| concepts[10].level | 0 |

| concepts[10].score | 0.0 |

| concepts[10].wikidata | https://www.wikidata.org/wiki/Q735 |

| concepts[10].display_name | Art |

| concepts[11].id | https://openalex.org/C62520636 |

| concepts[11].level | 1 |

| concepts[11].score | 0.0 |

| concepts[11].wikidata | https://www.wikidata.org/wiki/Q944 |

| concepts[11].display_name | Quantum mechanics |

| concepts[12].id | https://openalex.org/C6557445 |

| concepts[12].level | 1 |

| concepts[12].score | 0.0 |

| concepts[12].wikidata | https://www.wikidata.org/wiki/Q173113 |

| concepts[12].display_name | Agronomy |

| concepts[13].id | https://openalex.org/C121332964 |

| concepts[13].level | 0 |

| concepts[13].score | 0.0 |

| concepts[13].wikidata | https://www.wikidata.org/wiki/Q413 |

| concepts[13].display_name | Physics |

| keywords[0].id | https://openalex.org/keywords/computer-science |

| keywords[0].score | 0.829767107963562 |

| keywords[0].display_name | Computer science |

| keywords[1].id | https://openalex.org/keywords/pruning |

| keywords[1].score | 0.7423743009567261 |

| keywords[1].display_name | Pruning |

| keywords[2].id | https://openalex.org/keywords/language-model |

| keywords[2].score | 0.6298213005065918 |

| keywords[2].display_name | Language model |

| keywords[3].id | https://openalex.org/keywords/architecture |

| keywords[3].score | 0.6253625750541687 |

| keywords[3].display_name | Architecture |

| keywords[4].id | https://openalex.org/keywords/artificial-intelligence |

| keywords[4].score | 0.5626947283744812 |

| keywords[4].display_name | Artificial intelligence |

| keywords[5].id | https://openalex.org/keywords/artificial-neural-network |

| keywords[5].score | 0.5295194983482361 |

| keywords[5].display_name | Artificial neural network |

| keywords[6].id | https://openalex.org/keywords/machine-learning |

| keywords[6].score | 0.46950244903564453 |

| keywords[6].display_name | Machine learning |

| keywords[7].id | https://openalex.org/keywords/fine-tuning |

| keywords[7].score | 0.44637688994407654 |

| keywords[7].display_name | Fine-tuning |

| language | en |

| locations[0].id | doi:10.18653/v1/2023.findings-acl.539 |

| locations[0].is_oa | True |

| locations[0].source | |

| locations[0].license | cc-by |

| locations[0].pdf_url | https://aclanthology.org/2023.findings-acl.539.pdf |

| locations[0].version | publishedVersion |

| locations[0].raw_type | proceedings-article |

| locations[0].license_id | https://openalex.org/licenses/cc-by |

| locations[0].is_accepted | True |

| locations[0].is_published | True |

| locations[0].raw_source_name | Findings of the Association for Computational Linguistics: ACL 2023 |

| locations[0].landing_page_url | https://doi.org/10.18653/v1/2023.findings-acl.539 |

| indexed_in | crossref |

| authorships[0].author.id | https://openalex.org/A5060938182 |

| authorships[0].author.orcid | |

| authorships[0].author.display_name | Neal Lawton |

| authorships[0].affiliations[0].raw_affiliation_string | Information Sciences Institute |

| authorships[0].author_position | first |

| authorships[0].raw_author_name | Neal Lawton |

| authorships[0].is_corresponding | False |

| authorships[0].raw_affiliation_strings | Information Sciences Institute |

| authorships[1].author.id | https://openalex.org/A5085371439 |

| authorships[1].author.orcid | https://orcid.org/0000-0002-7806-9986 |

| authorships[1].author.display_name | Anoop Kumar |

| authorships[1].countries | US |

| authorships[1].affiliations[0].institution_ids | https://openalex.org/I1311688040 |

| authorships[1].affiliations[0].raw_affiliation_string | Amazon Alexa AI |

| authorships[1].institutions[0].id | https://openalex.org/I1311688040 |

| authorships[1].institutions[0].ror | https://ror.org/04mv4n011 |

| authorships[1].institutions[0].type | company |

| authorships[1].institutions[0].lineage | https://openalex.org/I1311688040 |

| authorships[1].institutions[0].country_code | US |

| authorships[1].institutions[0].display_name | Amazon (United States) |

| authorships[1].author_position | middle |

| authorships[1].raw_author_name | Anoop Kumar |

| authorships[1].is_corresponding | False |

| authorships[1].raw_affiliation_strings | Amazon Alexa AI |

| authorships[2].author.id | https://openalex.org/A5088771920 |

| authorships[2].author.orcid | https://orcid.org/0009-0005-1010-8896 |

| authorships[2].author.display_name | Govind Thattai |

| authorships[2].countries | US |

| authorships[2].affiliations[0].institution_ids | https://openalex.org/I1311688040 |

| authorships[2].affiliations[0].raw_affiliation_string | Amazon Alexa AI |

| authorships[2].institutions[0].id | https://openalex.org/I1311688040 |

| authorships[2].institutions[0].ror | https://ror.org/04mv4n011 |

| authorships[2].institutions[0].type | company |

| authorships[2].institutions[0].lineage | https://openalex.org/I1311688040 |

| authorships[2].institutions[0].country_code | US |

| authorships[2].institutions[0].display_name | Amazon (United States) |

| authorships[2].author_position | middle |

| authorships[2].raw_author_name | Govind Thattai |

| authorships[2].is_corresponding | False |

| authorships[2].raw_affiliation_strings | Amazon Alexa AI |

| authorships[3].author.id | https://openalex.org/A5101715504 |

| authorships[3].author.orcid | https://orcid.org/0000-0003-4215-0886 |

| authorships[3].author.display_name | Aram Galstyan |

| authorships[3].countries | US |

| authorships[3].affiliations[0].institution_ids | https://openalex.org/I1311688040 |

| authorships[3].affiliations[0].raw_affiliation_string | Amazon Alexa AI |

| authorships[3].institutions[0].id | https://openalex.org/I1311688040 |

| authorships[3].institutions[0].ror | https://ror.org/04mv4n011 |

| authorships[3].institutions[0].type | company |

| authorships[3].institutions[0].lineage | https://openalex.org/I1311688040 |

| authorships[3].institutions[0].country_code | US |

| authorships[3].institutions[0].display_name | Amazon (United States) |

| authorships[3].author_position | middle |

| authorships[3].raw_author_name | Aram Galstyan |

| authorships[3].is_corresponding | False |

| authorships[3].raw_affiliation_strings | Amazon Alexa AI |

| authorships[4].author.id | https://openalex.org/A5075920466 |

| authorships[4].author.orcid | https://orcid.org/0000-0002-0793-141X |

| authorships[4].author.display_name | Greg Ver Steeg |

| authorships[4].countries | US |

| authorships[4].affiliations[0].institution_ids | https://openalex.org/I1311688040 |

| authorships[4].affiliations[0].raw_affiliation_string | Amazon Alexa AI |

| authorships[4].institutions[0].id | https://openalex.org/I1311688040 |

| authorships[4].institutions[0].ror | https://ror.org/04mv4n011 |

| authorships[4].institutions[0].type | company |

| authorships[4].institutions[0].lineage | https://openalex.org/I1311688040 |

| authorships[4].institutions[0].country_code | US |

| authorships[4].institutions[0].display_name | Amazon (United States) |

| authorships[4].author_position | last |

| authorships[4].raw_author_name | Greg Ver Steeg |

| authorships[4].is_corresponding | False |

| authorships[4].raw_affiliation_strings | Amazon Alexa AI |

| has_content.pdf | True |

| has_content.grobid_xml | True |

| is_paratext | False |

| open_access.is_oa | True |

| open_access.oa_url | https://aclanthology.org/2023.findings-acl.539.pdf |

| open_access.oa_status | gold |

| open_access.any_repository_has_fulltext | False |

| created_date | 2025-10-10T00:00:00 |

| display_name | Neural Architecture Search for Parameter-Efficient Fine-tuning of Large Pre-trained Language Models |

| has_fulltext | True |

| is_retracted | False |

| updated_date | 2025-11-06T03:46:38.306776 |

| primary_topic.id | https://openalex.org/T10028 |

| primary_topic.field.id | https://openalex.org/fields/17 |

| primary_topic.field.display_name | Computer Science |

| primary_topic.score | 0.9998999834060669 |

| primary_topic.domain.id | https://openalex.org/domains/3 |

| primary_topic.domain.display_name | Physical Sciences |

| primary_topic.subfield.id | https://openalex.org/subfields/1702 |

| primary_topic.subfield.display_name | Artificial Intelligence |

| primary_topic.display_name | Topic Modeling |

| related_works | https://openalex.org/W2373300491, https://openalex.org/W2378744544, https://openalex.org/W2594301978, https://openalex.org/W2379704676, https://openalex.org/W1998810860, https://openalex.org/W3023285645, https://openalex.org/W3037551068, https://openalex.org/W3023594376, https://openalex.org/W4287802662, https://openalex.org/W4309877123 |

| cited_by_count | 8 |

| counts_by_year[0].year | 2025 |

| counts_by_year[0].cited_by_count | 4 |

| counts_by_year[1].year | 2024 |

| counts_by_year[1].cited_by_count | 1 |

| counts_by_year[2].year | 2023 |

| counts_by_year[2].cited_by_count | 3 |

| locations_count | 1 |

| best_oa_location.id | doi:10.18653/v1/2023.findings-acl.539 |

| best_oa_location.is_oa | True |

| best_oa_location.source | |

| best_oa_location.license | cc-by |

| best_oa_location.pdf_url | https://aclanthology.org/2023.findings-acl.539.pdf |

| best_oa_location.version | publishedVersion |

| best_oa_location.raw_type | proceedings-article |

| best_oa_location.license_id | https://openalex.org/licenses/cc-by |

| best_oa_location.is_accepted | True |

| best_oa_location.is_published | True |

| best_oa_location.raw_source_name | Findings of the Association for Computational Linguistics: ACL 2023 |

| best_oa_location.landing_page_url | https://doi.org/10.18653/v1/2023.findings-acl.539 |

| primary_location.id | doi:10.18653/v1/2023.findings-acl.539 |

| primary_location.is_oa | True |

| primary_location.source | |

| primary_location.license | cc-by |

| primary_location.pdf_url | https://aclanthology.org/2023.findings-acl.539.pdf |

| primary_location.version | publishedVersion |

| primary_location.raw_type | proceedings-article |

| primary_location.license_id | https://openalex.org/licenses/cc-by |

| primary_location.is_accepted | True |

| primary_location.is_published | True |

| primary_location.raw_source_name | Findings of the Association for Computational Linguistics: ACL 2023 |

| primary_location.landing_page_url | https://doi.org/10.18653/v1/2023.findings-acl.539 |

| publication_date | 2023-01-01 |

| publication_year | 2023 |

| referenced_works | https://openalex.org/W2946794439, https://openalex.org/W4286981949, https://openalex.org/W2964303773, https://openalex.org/W4287122891, https://openalex.org/W2707890836, https://openalex.org/W2951104886, https://openalex.org/W3176828726, https://openalex.org/W4287391717, https://openalex.org/W3153675281, https://openalex.org/W4206281850, https://openalex.org/W3205949070, https://openalex.org/W2805003733, https://openalex.org/W3099793224, https://openalex.org/W3034199299, https://openalex.org/W3103616906, https://openalex.org/W3098267758, https://openalex.org/W3101498587, https://openalex.org/W4294925020, https://openalex.org/W2923014074, https://openalex.org/W4385573610, https://openalex.org/W4205991051, https://openalex.org/W3174784402, https://openalex.org/W1677182931, https://openalex.org/W3174702398, https://openalex.org/W4292779060, https://openalex.org/W3168867926, https://openalex.org/W1522301498, https://openalex.org/W2963211188, https://openalex.org/W3105966348, https://openalex.org/W2978017171, https://openalex.org/W3202099651, https://openalex.org/W2896457183, https://openalex.org/W2970352191, https://openalex.org/W3015233032, https://openalex.org/W3174770825, https://openalex.org/W2965373594, https://openalex.org/W2970925270, https://openalex.org/W3034457371, https://openalex.org/W3103368673 |

| referenced_works_count | 39 |

| abstract_inverted_index.a | 15, 20, 29 |

| abstract_inverted_index.We | 63, 78 |

| abstract_inverted_index.an | 65 |

| abstract_inverted_index.be | 55 |

| abstract_inverted_index.by | 12 |

| abstract_inverted_index.in | 48, 98 |

| abstract_inverted_index.of | 22, 32, 86 |

| abstract_inverted_index.on | 81 |

| abstract_inverted_index.or | 25 |

| abstract_inverted_index.to | 9, 36, 54 |

| abstract_inverted_index.NAS | 67 |

| abstract_inverted_index.PET | 41, 71, 92 |

| abstract_inverted_index.and | 27, 75, 89 |

| abstract_inverted_index.but | 50 |

| abstract_inverted_index.fit | 4 |

| abstract_inverted_index.for | 19, 69 |

| abstract_inverted_index.how | 91 |

| abstract_inverted_index.new | 33 |

| abstract_inverted_index.our | 87 |

| abstract_inverted_index.the | 37, 44, 52, 84 |

| abstract_inverted_index.via | 57, 73 |

| abstract_inverted_index.GLUE | 82 |

| abstract_inverted_index.from | 43 |

| abstract_inverted_index.have | 51 |

| abstract_inverted_index.well | 47 |

| abstract_inverted_index.(PET) | 2 |

| abstract_inverted_index.model | 23, 34 |

| abstract_inverted_index.small | 16, 30 |

| abstract_inverted_index.tasks | 11 |

| abstract_inverted_index.(NAS). | 62 |

| abstract_inverted_index.(PLMs) | 8 |

| abstract_inverted_index.affect | 96 |

| abstract_inverted_index.design | 94 |

| abstract_inverted_index.either | 13 |

| abstract_inverted_index.method | 68 |

| abstract_inverted_index.models | 7 |

| abstract_inverted_index.neural | 59 |

| abstract_inverted_index.number | 31 |

| abstract_inverted_index.search | 61 |

| abstract_inverted_index.subset | 21 |

| abstract_inverted_index.tuning | 1 |

| abstract_inverted_index.update | 18 |

| abstract_inverted_index.choices | 95 |

| abstract_inverted_index.discuss | 90 |

| abstract_inverted_index.methods | 3 |

| abstract_inverted_index.perform | 46 |

| abstract_inverted_index.present | 79 |

| abstract_inverted_index.propose | 64 |

| abstract_inverted_index.improved | 56 |

| abstract_inverted_index.language | 6 |

| abstract_inverted_index.learning | 70 |

| abstract_inverted_index.network. | 39 |

| abstract_inverted_index.pruning. | 77 |

| abstract_inverted_index.algorithm | 88 |

| abstract_inverted_index.appending | 26 |

| abstract_inverted_index.automated | 58 |

| abstract_inverted_index.computing | 14 |

| abstract_inverted_index.efficient | 66 |

| abstract_inverted_index.potential | 53 |

| abstract_inverted_index.practice, | 49 |

| abstract_inverted_index.practice. | 99 |

| abstract_inverted_index.compressed | 17 |

| abstract_inverted_index.downstream | 10 |

| abstract_inverted_index.literature | 45 |

| abstract_inverted_index.parameters | 35 |

| abstract_inverted_index.structured | 74 |

| abstract_inverted_index.experiments | 80 |

| abstract_inverted_index.fine-tuning | 28 |

| abstract_inverted_index.parameters, | 24 |

| abstract_inverted_index.performance | 97 |

| abstract_inverted_index.pre-trained | 5, 38 |

| abstract_inverted_index.architecture | 60 |

| abstract_inverted_index.unstructured | 76 |

| abstract_inverted_index.Hand-designed | 40 |

| abstract_inverted_index.architectural | 93 |

| abstract_inverted_index.architectures | 42, 72 |

| abstract_inverted_index.demonstrating | 83 |

| abstract_inverted_index.effectiveness | 85 |

| abstract_inverted_index.Parameter-efficient | 0 |

| cited_by_percentile_year.max | 98 |

| cited_by_percentile_year.min | 90 |

| countries_distinct_count | 1 |

| institutions_distinct_count | 5 |

| citation_normalized_percentile.value | 0.86864779 |

| citation_normalized_percentile.is_in_top_1_percent | False |

| citation_normalized_percentile.is_in_top_10_percent | False |
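The `abstract_inverted_index.*` rows in the payload above store the abstract as a map from each word to the positions where it occurs. A minimal sketch of how such a map could be turned back into running text follows; the helper name `rebuild_abstract` is hypothetical, and `sample` is a truncated excerpt holding only positions 0 through 8 from the rows above.

```python
def rebuild_abstract(inverted_index):
    """Rebuild text from a {word: [positions]} inverted index."""
    by_position = {}
    for word, positions in inverted_index.items():
        for pos in positions:
            by_position[pos] = word
    # Emit words in ascending position order, separated by single spaces.
    return " ".join(by_position[pos] for pos in sorted(by_position))


# Excerpt of the payload rows, limited to the first nine positions.
# In the full payload "pre-trained" also occurs at position 38; that
# occurrence is omitted here so the excerpt stays contiguous.
sample = {
    "Parameter-efficient": [0],
    "tuning": [1],
    "(PET)": [2],
    "methods": [3],
    "fit": [4],
    "pre-trained": [5],
    "language": [6],
    "models": [7],
    "(PLMs)": [8],
}

print(rebuild_abstract(sample))
# prints: Parameter-efficient tuning (PET) methods fit pre-trained language models (PLMs)
```

Applied to the complete set of `abstract_inverted_index.*` rows, the same loop would be expected to reproduce the abstract shown near the top of this record.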