Optimal Multi-Distribution Learning

· 2025

· Open Access

· DOI: https://doi.org/10.1145/3760256

· OA: W4413602204

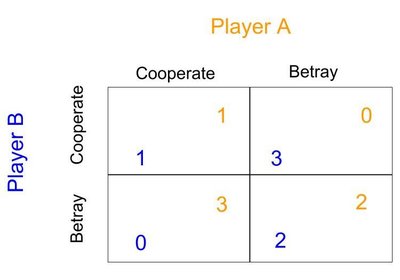

Multi-distribution learning (MDL), which seeks to learn a shared model that minimizes the worst-case risk across \(k\) distinct data distributions, has emerged as a unified framework in response to the evolving demand for robustness, fairness, and multi-group collaboration, among others. Achieving data-efficient MDL necessitates adaptive sampling, also called on-demand sampling, throughout the learning process. However, substantial gaps remain between the state-of-the-art upper and lower bounds on the optimal sample complexity. Focusing on a hypothesis class of Vapnik–Chervonenkis (VC) dimension \(d\), we propose a novel algorithm that yields an \(\varepsilon\)-optimal randomized hypothesis with a sample complexity on the order of \(\frac{d+k}{\varepsilon^2}\) (up to logarithmic factors), matching the best-known lower bound. Our algorithmic ideas and theory are further extended to accommodate Rademacher classes. The proposed algorithms are oracle-efficient: they access the hypothesis class solely through an empirical risk minimization oracle. Additionally, we establish the necessity of improper learning, revealing a large sample size barrier when only deterministic, proper hypotheses are permitted. These findings resolve three open problems presented at COLT 2023 (i.e., Awasthi et al. [4, Problems 1, 3, and 4]).
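
To make the worst-case objective concrete, the following toy sketch evaluates the MDL objective for a randomized hypothesis, that is, a mixture over a finite set of hypotheses. It is not the algorithm proposed in the paper. The function name `worst_case_risk` and the risk values are hypothetical, chosen only for illustration, and the true per-distribution risks are assumed to be known, which is never the case in practice.

```python
from typing import Sequence


def worst_case_risk(risks: Sequence[Sequence[float]],
                    weights: Sequence[float]) -> float:
    """Worst-case risk of a randomized hypothesis over k distributions.

    risks[j][i] is the (assumed known) risk of hypothesis j on distribution i.
    weights[j] is the probability of drawing hypothesis j.
    """
    k = len(risks[0])
    # Expected risk of the mixture on each distribution.
    mixed = [sum(w * r[i] for w, r in zip(weights, risks)) for i in range(k)]
    # The MDL objective is the maximum over the k distributions.
    return max(mixed)


# Hypothetical toy instance: two hypotheses, two distributions.
# Each hypothesis is perfect on one distribution and always wrong on the other.
risks = [[0.0, 1.0],
         [1.0, 0.0]]

# Best deterministic choice among the two hypotheses.
det_best = min(worst_case_risk(risks, w) for w in ([1.0, 0.0], [0.0, 1.0]))

# Uniform mixture of the two hypotheses.
mix = worst_case_risk(risks, [0.5, 0.5])

print(det_best, mix)
```

In this toy instance the uniform mixture has a strictly smaller worst-case risk than either hypothesis alone, which shows only how a randomized hypothesis is scored under the objective; the sample size barrier for deterministic, proper hypotheses stated in the abstract is a separate, statistical result.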