Feedforward Sequential Memory Neural Networks without Recurrent Feedback

Shiliang Zhang, Hui Jiang, Si Wei, Li-Rong Dai


2015 · Open Access · DOI: https://doi.org/10.48550/arxiv.1510.02693


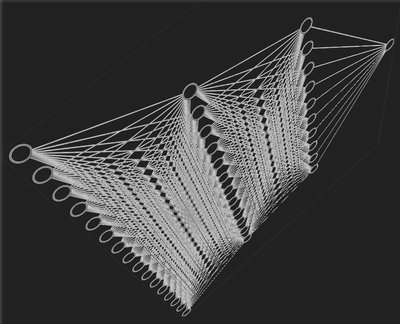

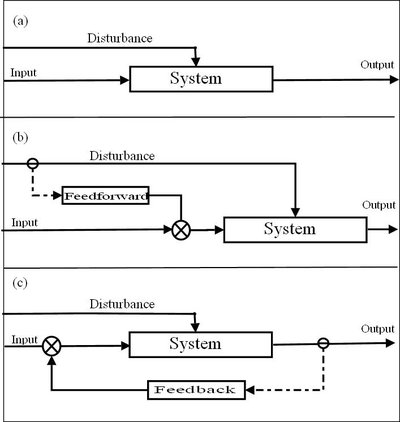

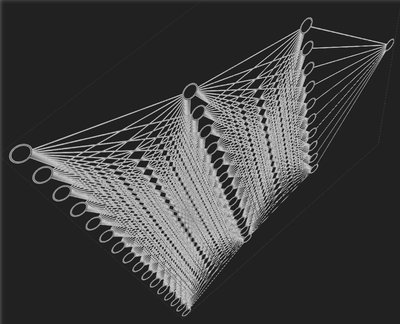

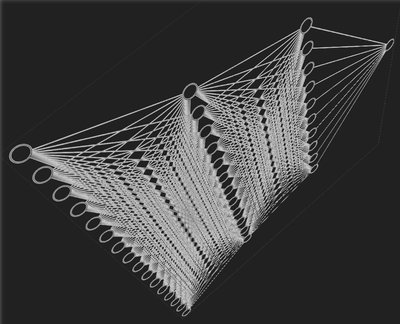

We introduce a new structure for memory neural networks, called feedforward sequential memory networks (FSMN), which can learn long-term dependencies without using recurrent feedback. The proposed FSMN is a standard feedforward neural network equipped with learnable sequential memory blocks in its hidden layers. In this work, we apply FSMN to several language modeling (LM) tasks. Experimental results show that the memory blocks in FSMN can learn effective representations of long histories, and that FSMN-based language models can significantly outperform not only feedforward neural network (FNN) based LMs but also the popular recurrent neural network (RNN) LMs.
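To make the idea of a "learnable sequential memory block" more concrete, here is a minimal Python sketch. It assumes a scalar memory block that summarizes the current and previous N hidden activations as a learned weighted sum, `h_tilde[t] = sum_{i=0..N} a[i] * h[t-i]`, which the next layer then consumes alongside `h[t]`. The function names `memory_block`, `matvec` and `fsmn_layer`, the zero padding before the first frame, and the ReLU activation are illustrative assumptions, not details taken from this abstract; the exact formulation in the paper may differ.

```python
from typing import List

Vector = List[float]
Matrix = List[Vector]


def memory_block(hidden: List[Vector], coeffs: Vector) -> List[Vector]:
    """Assumed scalar memory block: h_tilde[t] = sum_{i=0..N} coeffs[i] * hidden[t - i].

    coeffs holds N + 1 learnable scalars (the order N is a free choice here).
    Frames before t = 0 are treated as zeros (an assumed padding choice).
    """
    dim = len(hidden[0])
    out: List[Vector] = []
    for t in range(len(hidden)):
        acc = [0.0] * dim
        for i, a in enumerate(coeffs):
            if t - i < 0:
                break  # no history before the first frame
            acc = [s + a * h for s, h in zip(acc, hidden[t - i])]
        out.append(acc)
    return out


def matvec(m: Matrix, v: Vector) -> Vector:
    """Plain matrix-vector product."""
    return [sum(w * x for w, x in zip(row, v)) for row in m]


def fsmn_layer(hidden: List[Vector], coeffs: Vector, W: Matrix,
               W_tilde: Matrix, b: Vector) -> List[Vector]:
    """Next layer: relu(W h[t] + W_tilde h_tilde[t] + b); ReLU is an assumption.

    Each memory vector is computed from hidden activations only, never from an
    earlier memory or output, so the layer has no recurrent feedback.
    """
    memory = memory_block(hidden, coeffs)
    outputs: List[Vector] = []
    for h_t, m_t in zip(hidden, memory):
        z = [p + q + c for p, q, c in zip(matvec(W, h_t), matvec(W_tilde, m_t), b)]
        outputs.append([max(0.0, v) for v in z])
    return outputs


if __name__ == "__main__":
    hidden = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]  # three time steps, dim 2
    coeffs = [1.0, 0.5, 0.25]                       # weights for h[t], h[t-1], h[t-2]
    print(memory_block(hidden, coeffs))
    # -> [[1.0, 0.0], [0.5, 1.0], [1.25, 1.5]]
    identity = [[1.0, 0.0], [0.0, 1.0]]
    print(fsmn_layer(hidden, coeffs, identity, identity, [0.0, -2.0]))
    # -> [[2.0, 0.0], [0.5, 0.0], [2.25, 0.5]]
```

With `coeffs = [1.0, 0.5, 0.25]`, older frames are weighted less, so the memory at the last step mixes all three frames. Because no memory vector depends on an earlier memory or output, every time step can be computed independently (in effect a causal filter over time), unlike the sequential state update of an RNN.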


Concepts

- Recurrent neural network
- Feed forward
- Computer science
- Feedforward neural network
- Artificial neural network
- Time delay neural network
- Dependency (UML)
- Artificial intelligence
- Long short term memory
- Control engineering
- Engineering

Metadata

- Type: preprint
- Language: en
- Landing Page: http://arxiv.org/abs/1510.02693 (PDF: https://arxiv.org/pdf/1510.02693)
- OA Status: green
- Cited By: 20
- References: 17
- Related Works: 10
- OpenAlex ID: https://openalex.org/W1920942766
