MAMA: Meta-optimized Angular Margin Contrastive Framework for Video-Language Representation Learning

2024 · Open Access · DOI: https://doi.org/10.48550/arxiv.2407.03788

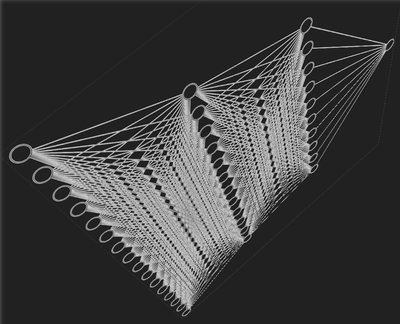

Data quality stands at the forefront of deciding the effectiveness of video-language representation learning. However, video-text pairs in previous data typically do not align perfectly with each other, which might lead to video-language representations that do not accurately reflect cross-modal semantics. Moreover, previous data also possess an uneven distribution of concepts, thereby hampering downstream performance on unpopular subjects. To address these problems, we propose MAMA, a new approach to learning video-language representations that uses a contrastive objective with a subtractive angular margin to regularize cross-modal representations in their effort to reach perfect similarity. Furthermore, to adapt to the non-uniform concept distribution, MAMA uses a multi-layer perceptron (MLP)-parameterized weighting function that maps loss values to sample weights, which enables dynamic adjustment of the model's focus throughout training. With the training guided by a small amount of unbiased meta-data and augmented by video-text data generated by a large vision-language model, MAMA improves video-language representations and achieves superior performance on commonly used video question answering and text-video retrieval datasets. The code, model, and data have been made available at https://nguyentthong.github.io/MAMA.
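The two ingredients named in the abstract can be illustrated with a minimal sketch. It is not the authors' implementation: the function names, the InfoNCE form of the contrastive objective, the margin and temperature values, the clamping of the angle at zero, and the one-hidden-layer sigmoid MLP are all assumptions made for illustration. The meta-optimization step that trains the weighting function on the unbiased meta-data is omitted.

```python
import math

def angular_margin_infonce(sim, margin=0.1, temperature=0.07):
    """Per-sample video-to-text contrastive losses (hypothetical form).

    sim[i][j] is the cosine similarity between video i and text j;
    the diagonal holds the positive pairs. The margin is SUBTRACTED
    from the positive pair's angle (an assumed reading of the abstract),
    so a positive pair is treated as slightly closer than it really is
    and is pushed less hard toward perfect similarity.
    """
    losses = []
    for i, row in enumerate(sim):
        logits = []
        for j, c in enumerate(row):
            c = max(-1.0, min(1.0, c))            # keep acos in its domain
            if i == j:
                theta = math.acos(c)              # angle of the positive pair
                c = math.cos(max(theta - margin, 0.0))
            logits.append(c / temperature)
        m = max(logits)                           # stable log-sum-exp
        log_z = m + math.log(sum(math.exp(l - m) for l in logits))
        losses.append(log_z - logits[i])          # -log softmax of the positive
    return losses

def mlp_weight(loss, w1, b1, w2, b2):
    """Map a scalar loss to a weight in (0, 1) with a tiny MLP (assumed shape)."""
    hidden = [max(0.0, w * loss + b) for w, b in zip(w1, b1)]   # ReLU layer
    z = sum(w * h for w, h in zip(w2, hidden)) + b2
    return 1.0 / (1.0 + math.exp(-z))                            # sigmoid output

def weighted_loss(losses, params):
    """Average of the per-sample losses after reweighting by the MLP."""
    weights = [mlp_weight(l, *params) for l in losses]
    return sum(w * l for w, l in zip(weights, losses)) / len(losses)

# Toy batch of two video-text pairs with imperfect alignment.
sim = [[0.8, 0.1], [0.2, 0.7]]
plain = angular_margin_infonce(sim, margin=0.0)
relaxed = angular_margin_infonce(sim, margin=0.2)
params = ([1.0, -1.0], [0.0, 0.0], [0.5, 0.5], 0.0)   # arbitrary, untrained
total = weighted_loss(relaxed, params)
```

In this sketch a larger margin lowers the loss on each positive pair, which weakens the pull toward perfect similarity for pairs that may not be perfectly aligned. In a full training loop the MLP parameters would be learned rather than fixed.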

- Type: preprint
- Language: en
- Landing Page: http://arxiv.org/abs/2407.03788, https://arxiv.org/pdf/2407.03788
- OA Status: green
- Related Works: 10
- OpenAlex ID: https://openalex.org/W4400434033